Difference between revisions of "PineCube"


Revision as of 10:22, 10 May 2022

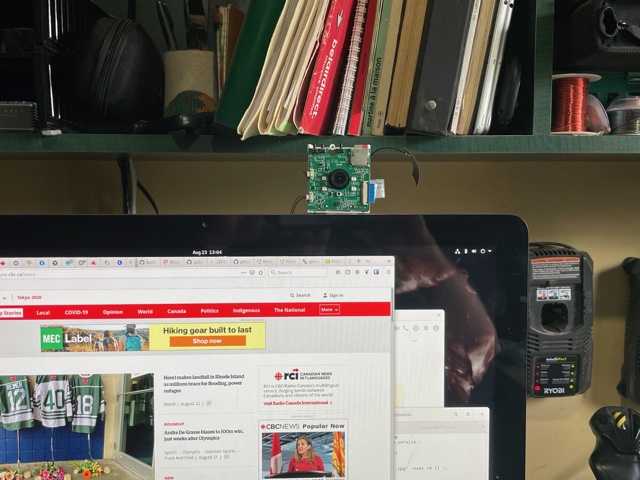

The PineCube is a small, low-powered, open source IP camera. Whether you’re a parent looking for a FOSS baby-camera, a privacy oriented shop keeper, a home owner looking for a security camera, or perhaps a tinkerer needing a camera for your drone, the CUBE can be the device for you. It features a 5MPx OmniVision sensor, IR LEDs for night vision, passive Power over Ethernet, and a microphone.

Specifications

- Dimensions: 55mm x 51mm x 51.5mm

- Weight: 55g

- Storage:

- MicroSD slot, bootable

- 128Mb SPI Nor Flash, bootable

- Cameras: OV5640, 5Mpx

- CPU: Allwinner(Sochip) ARM Cortex-A7 MPCore, 800MHz

- RAM: 128MB DDR3

- I/O:

- 10/100Mbps Ethernet with passive PoE (4-18V!)

- USB 2.0 A host

- 26 pins GPIO port

- 2x 3.3V Output

- 2x 5V Output

- 1x I2C

- 2x UART (3.3V)

- 2x PWM

- 1x SPI

- 1x eMMC/SDIO/SD (8-bit)

- 6x Interrupts

- Note: Interfaces are multiplexed, so they cannot all be used at the same time

- Internal microphone

- Network:

- WiFi

- Screen: optional 4.5" RGB LCD screen ( RB043H40T03A-IPS or DFC-XS4300240 V01 )

- Misc. features:

- Volume and home buttons

- Speakers and Microphone

- IR LEDs for night vision

- Passive infrared sensor

- Power DC in:

- 5V 1A from MicroUSB Port or GPIO port

- 4V-18V from Ethernet passive PoE

- Battery: optional 950-1600mAh model: 903048 Lithium Polymer Ion Battery Pack, can be purchased at Amazon.com

PineCube board information, schematics and certifications

- PineCube mainboard schematic:

- PineCube faceboard schematic:

- PineCube certifications:

Datasheets for components and peripherals

- Allwinner (Sochip) S3 SoC information:

- X-Powers AXP209 PMU (Power Management Unit) information:

- CMOS camera module information:

- LCD touch screen panel information:

- Lithium battery information:

- WiFi/BT module information:

- Case information:

Operating Systems

Mainlining Efforts

Please note:

- this list is most likely not complete

- no review of functionality is done here, it only serves as a collection of efforts

| Project | Type | Link | Available in version |
|---|---|---|---|
| Linux kernel | PineCube device tree entry | https://lkml.org/lkml/2020/9/22/1241 | 5.10 |
| Linux kernel | Correction for AXP209 driver | https://lkml.org/lkml/2020/9/22/1243 | 5.9 |
| Linux kernel | Additional Fixes for AXP209 driver | https://lore.kernel.org/lkml/20201031182137.1879521-8-contact@paulk.fr/ | 5.12 |
| Linux kernel | Device Tree Fixes | https://lore.kernel.org/lkml/20201003234842.1121077-1-icenowy@aosc.io/ | 5.10 |
| Linux kernel | Audio Device and IR LED Fix | https://github.com/danielfullmer/pinecube-nixos/blob/master/kernel/Pine64-PineCube-support.patch | TBD |
| U-Boot | PineCube Board Support | https://patchwork.ozlabs.org/project/uboot/list/?series=210044 | v2021.04 |
| Buildroot | PineCube Board Support | https://patchwork.ozlabs.org/project/buildroot/list/?series=294245 | |

NixOS

Buildroot

Elimo Engineering integrated support for the PineCube into Buildroot.

This has not been merged into upstream Buildroot yet, but you can find the repo on Elimo's GitHub account and build instructions in the board support directory readme. The most important thing that this provides is support for the S3's DDR3 in U-Boot. Unfortunately mainline U-Boot does not have that yet, but the U-Boot patches from Daniel Fullmer's NixOS repo were easy enough to use on Buildroot. This should get you a functional system that boots to a console on UART0. It is fast too, taking about 1.5 seconds from U-Boot to the login prompt.

Armbian

The Ubuntu Groovy release is an experimental, automatically generated release, and it appears to support more hardware than the other Armbian releases.

Armbian Build Image with motion [microSD Boot] [20201222]

- Armbian Ubuntu Focal build for the Pinecube with the motion (detection) package preinstalled.

- There are 2 ways to interact with the OS:

- Scan for the device IP (with hostname pinecube)

- Use the PINE64 USB SERIAL CONSOLE/PROGRAMMER to login to the serial console, then check for assigned IP

- DD image (for 8GB microSD card and above)

- Direct download from pine64.org

- MD5 (XZip file): 61e5a6d3ab0f74ce8367c97b7f8cbb7b

- File Size: 328MB

- Direct download from pine64.org

GitHub gist for the userpatch which pre-installs and configures the motion (detection) package.

Official Armbian builds for the PineCube are available for download, once again thanks to the work of Icenowy Zheng. Although the PineCube is not an officially supported board, these builds make it possible to run Debian and Ubuntu.

A serial console can be established at 115200 baud, 8N1 (no hardware flow control). Login credentials are as usual in Armbian (login: root, password: 1234).

The Motion daemon can be enabled as root with systemctl enable motion. Set the video settings in /etc/motion/motion.conf to 640x480 15fps YU12, then reboot. Note that Motion currently takes considerable resources on the PineCube, so you'll want to stop it with systemctl stop motion before running things like apt update and apt upgrade, and start it again afterwards with systemctl start motion.
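The stop, run, start sequence described above can be wrapped in a small helper. This is only a minimal sketch: the function name with_motion_stopped is made up for illustration, and it assumes it is run as root on a systemd-based Armbian image where the service is called motion.

```shell
#!/bin/bash
# Hypothetical helper (not part of Armbian or Motion): stop the motion
# service, run the given command, then start motion again even if the
# command failed. The command's exit status is passed through.
with_motion_stopped() {
    systemctl stop motion
    "$@"
    local status=$?
    systemctl start motion
    return "$status"
}
```

For example, with_motion_stopped apt upgrade frees the CPU for the upgrade and brings the camera service back afterwards.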

Serial connection using screen and the woodpecker USB serial device

First set the woodpecker's S1 jumper to 3V3. Then connect the woodpecker USB serial device to the PineCube. Pin 1 on the PineCube has a small white dot on the PCB - this should be directly next to the microusb power connection. Attach the GND pin on the woodpecker to pin 6 (GND) on the PineCube, TXD from the woodpecker to pin 10 (UART_RXD) on the PineCube, and RXD from the woodpecker to pin 8 (UART_TXD) on the PineCube.

On the host system which has the woodpecker USB serial device attached, it is possible to run screen and to communicate directly with the PineCube:

screen /dev/ttyUSB0 115200

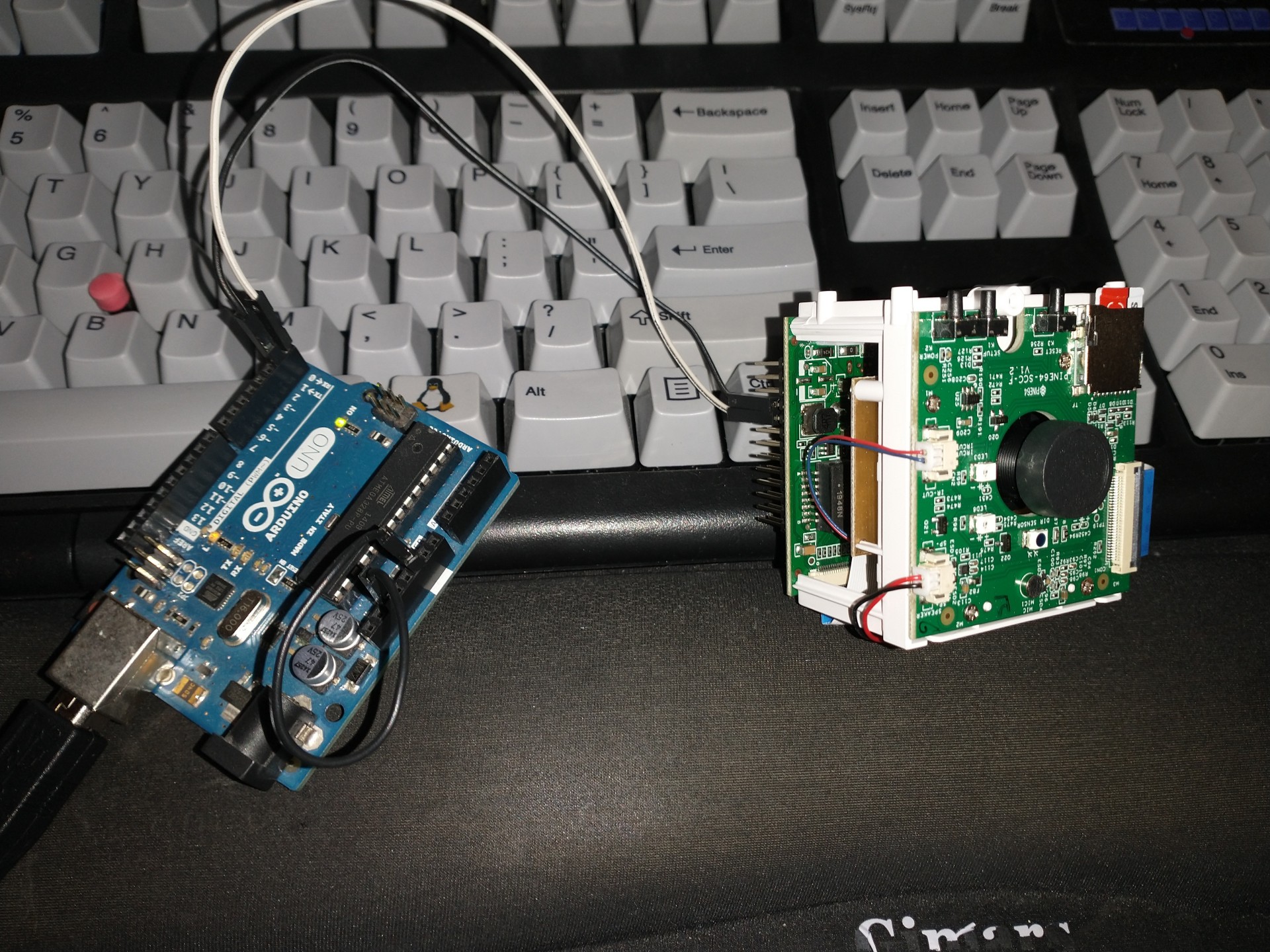

Serial connection using screen and Arduino Uno

You can use the Arduino Uno or other Arduino boards as a USB serial device.

First you must either remove the microcontroller from its socket or, if your Arduino board does not allow this, connect RESET (RST) to GND with a jumper wire to hold the microcontroller in reset.

After this you can either use the Arduino IDE and its Serial monitor after selecting your /dev/ttyACMx Arduino device, or screen:

screen /dev/ttyACM0 115200

Serial connection using pinephone/pinebook pro serial debugging cable

You can use the serial console USB cable for the PinePhone and Pinebook Pro, available at the store. Connect it to the GPIO block with a female terminal block and breadboard wires at the following locations, in a "null modem" configuration with transmit and receive crossed between your computer and the PineCube:

S - Ground (to pin 9)
R - Transmit (to pin 8)
T - Receive (to pin 10)

From Linux you can access the console of the pinecube using the screen command:

screen /dev/ttyUSB0 115200

Basic bandwidth tests with iperf3

Install armbian-config:

apt install armbian-config

Enable iperf3 through the menu in armbian-config:

armbian-config

On a test computer on the same network segment run iperf3 as a client:

iperf3 -c pinecube -t 60

On the same test computer, run iperf3 in the reverse direction:

iperf3 -c pinecube -t 60 -R

Performance results

Wireless network performance

The performance results reflect using the wireless network. The link speed was 72.2Mb/s using 2.462GHz wireless. Running sixty second iperf3 tests, the observed throughput varies between 28 and 50Mb/s to a host on the same network segment. The testing host is connected to an Ethernet switch which is also connected to a wireless bridge. The wireless network uses WPA2 and the PineCube is connected to this wireless network bridge.

Client rate for sixty seconds:

[ ID] Interval Transfer Bitrate Retr
[ 5] 0.00-60.00 sec 293 MBytes 41.0 Mbits/sec 1 sender
[ 5] 0.00-60.01 sec 291 MBytes 40.7 Mbits/sec receiver

Client rate with -R for sixty seconds:

[ ID] Interval Transfer Bitrate Retr
[ 5] 0.00-60.85 sec 263 MBytes 36.2 Mbits/sec 3 sender
[ 5] 0.00-60.00 sec 259 MBytes 36.1 Mbits/sec receiver

Using WireGuard to protect the traffic between the PineCube and the test system, the throughput drops only slightly, to roughly 32-34 Mbits/sec.

Client rate for sixty seconds with WireGuard:

[ ID] Interval Transfer Bitrate Retr
[ 5] 0.00-60.00 sec 230 MBytes 32.1 Mbits/sec 0 sender
[ 5] 0.00-60.09 sec 229 MBytes 32.0 Mbits/sec receiver

Client rate with -R for sixty seconds with WireGuard:

[ ID] Interval Transfer Bitrate Retr
[ 5] 0.00-60.14 sec 246 MBytes 34.3 Mbits/sec 7 sender
[ 5] 0.00-60.00 sec 245 MBytes 34.2 Mbits/sec receiver

Wired network performance

The Ethernet network does not work in the current Ubuntu Focal Armbian image or the Ubuntu Groovy Armbian image.

The performance results reflect using the Ethernet network. The link speed was 100Mb/s using a 1000Mb/s prosumer switch. Running sixty second iperf3 tests: the observed throughput varies between 92-102Mb/s to a host on the same network segment. The testing host is connected to the same Ethernet switch which is also connected to the PineCube.

Client rate for sixty seconds:

[ ID] Interval Transfer Bitrate Retr
[ 5] 0.00-60.00 sec 675 MBytes 94.4 Mbits/sec 0 sender
[ 5] 0.00-60.01 sec 673 MBytes 94.0 Mbits/sec receiver

Client rate with -R for sixty seconds:

[ ID] Interval Transfer Bitrate Retr
[ 5] 0.00-60.00 sec 673 MBytes 94.1 Mbits/sec 0 sender
[ 5] 0.00-60.00 sec 673 MBytes 94.1 Mbits/sec receiver

Using WireGuard to protect the traffic between the PineCube and the test system reduces the throughput to roughly 71 Mbits/sec in the forward direction and roughly 90 Mbits/sec in the reverse direction.

Client rate for sixty seconds with WireGuard:

[ ID] Interval Transfer Bitrate Retr
[ 5] 0.00-60.00 sec 510 MBytes 71.2 Mbits/sec 0 sender
[ 5] 0.00-60.01 sec 509 MBytes 71.1 Mbits/sec receiver

Client rate with -R for sixty seconds with WireGuard:

[ ID] Interval Transfer Bitrate Retr
[ 5] 0.00-60.01 sec 642 MBytes 89.8 Mbits/sec 0 sender
[ 5] 0.00-60.00 sec 641 MBytes 89.7 Mbits/sec receiver
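When repeating these measurements, it can help to reduce each run to its sender and receiver bitrates. The following is a minimal sketch that assumes the human-readable iperf3 summary format shown above; the function name iperf3_summary is made up for illustration.

```shell
#!/bin/bash
# Hypothetical helper: read iperf3 client output on stdin and print one
# line per summary row, e.g. "sender 41.0 Mbits/sec".
iperf3_summary() {
    awk '$NF == "sender" || $NF == "receiver" {
        # The bitrate unit field ends in "bits/sec"; the number precedes it
        for (i = 2; i <= NF; i++)
            if ($i ~ /bits\/sec$/) print $NF, $(i-1), $i
    }'
}
```

It can be used as iperf3 -c pinecube -t 60 | iperf3_summary, and with -R added for the reverse direction.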

Streaming the camera to the network

In this section we document a variety of ways to stream video to the network from the PineCube. Unless specified otherwise, all of these examples have been tested on Ubuntu groovy (20.10). See this small project for the pinecube for easy to use programs tuned for the PineCube.

In the examples which use h264, we are currently encoding using the x264 library which is not very fast on this CPU. The SoC in the PineCube does have a hardware h264 encoder, which the authors of these examples have so far not tried to use. It appears that https://github.com/gtalusan/gst-plugin-cedar might provide easy access to it, however. Please update this wiki if you find out how to use the hardware encoder!

gstreamer: h264 HLS

HLS (HTTP Live Streaming) has the advantage that it is easy to play in any modern web browser, including Android and iPhone devices, and that it is easy to put an HTTP caching proxy in front of it to scale to many viewers. It has the disadvantages of adding (at minimum) several seconds of latency, and of requiring an h264 encoder (which we have in hardware, but haven't figured out how to use yet, so, we're stuck with the slow software one).

HLS segments a video stream into small chunks which are stored as .ts (MPEG Transport Stream) files, and (re)writes a playlist.m3u8 file which clients constantly refresh to discover which .ts files they should download. We use a tmpfs file system to avoid needing to write these files to the sdcard in the PineCube. Besides the program which writes the .ts and .m3u8 files (gst-launch-1.0, in our case), we'll also need a very basic web page in tmpfs and a webserver to serve the files.

Create an hls directory to be shared in the existing tmpfs file system that is mounted at /dev/shm:

mkdir /dev/shm/hls/

Create an index.html and optionally a favicon.ico or even a set of icons, and then put the files into the /dev/shm/hls directory. An example index.html that works is available in the Getting Started section of the README for hls.js. We recommend downloading the hls.js file and editing the example index.html to serve your local copy of it instead of fetching it from a CDN. This file provides HLS playback capabilities in browsers which don't natively support it (which is most browsers aside from the iPhone).
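As an illustration of the kind of page described above, the following sketch writes a minimal index.html that plays playlist.m3u8 through a local copy of hls.js. It is not the README example itself: the function name write_hls_index is made up, and the local file name hls.js is an assumption about how you saved the library.

```shell
#!/bin/bash
# Hypothetical helper: write a minimal HLS player page into the given
# directory (default /dev/shm/hls). It assumes a local copy of hls.js
# has been saved next to it under the name hls.js.
write_hls_index() {
    local dir="${1:-/dev/shm/hls}"
    mkdir -p "$dir"
    cat > "$dir/index.html" <<'EOF'
<!DOCTYPE html>
<html>
<head><meta charset="utf-8"><title>PineCube</title></head>
<body>
<video id="video" controls autoplay muted></video>
<script src="hls.js"></script>
<script>
  var video = document.getElementById('video');
  var src = 'playlist.m3u8';
  if (Hls.isSupported()) {
    // Browsers without native HLS: let hls.js feed the video element
    var hls = new Hls();
    hls.loadSource(src);
    hls.attachMedia(video);
  } else if (video.canPlayType('application/vnd.apple.mpegurl')) {
    // Browsers with native HLS support
    video.src = src;
  }
</script>
</body>
</html>
EOF
}
```

Running write_hls_index with no arguments places the page in /dev/shm/hls, next to the files written by the capture pipeline.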

In one terminal, run the camera capture pipeline:

cd /dev/shm/hls/ &&

media-ctl --set-v4l2 '"ov5640 1-003c":0[fmt:UYVY8_2X8/320x240@1/15]' &&

gst-launch-1.0 v4l2src ! video/x-raw,width=320,height=240,format=UYVY,framerate=15/1 ! decodebin ! videoconvert ! video/x-raw,format=I420 ! clockoverlay ! timeoverlay valignment=bottom ! x264enc speed-preset=ultrafast tune=zerolatency ! mpegtsmux ! hlssink target-duration=1 playlist-length=2 max-files=3

Alternatively it is possible to capture at a higher resolution:

media-ctl --set-v4l2 '"ov5640 1-003c":0[fmt:UYVY8_2X8/1920x1080@1/15]'

cd /dev/shm/hls/ && gst-launch-1.0 v4l2src ! video/x-raw,width=1920,height=1080,format=UYVY,framerate=15/1 ! decodebin ! videoconvert ! video/x-raw,format=I420 ! clockoverlay ! timeoverlay valignment=bottom ! x264enc speed-preset=ultrafast tune=zerolatency ! mpegtsmux ! hlssink target-duration=1 playlist-length=2 max-files=3

In another terminal, run a simple single-threaded webserver which will serve html, javascript, and HLS to web clients:

cd /dev/shm/hls/ && python3 -m http.server

Alternatively, install a more efficient web server (apt install nginx) and set the server root for the default configuration to be /dev/shm/hls. This will run on port 80 rather than port 8000, which is the default for the python3 server.

It should be possible to view the HLS stream directly in a web browser by visiting http://pinecube:8000/ if pinecube is the correct hostname and the name correctly resolves.

You can also view the HLS stream with VLC: vlc http://pinecube:8000/playlist.m3u8

Or with gst-play-1.0: gst-play-1.0 http://pinecube:8000/playlist.m3u8 (or with mpv, ffplay, etc)

To find out about other options you can configure in the hlssink gstreamer element, you can run gst-inspect-1.0 hlssink.

It is worth noting here that the hlssink element in GStreamer is not widely used in production environments. It is handy for testing, but for real-world free-software HLS live streaming deployments the standard tool today (January 2021) is nginx's RTMP module which can be used with ffmpeg to produce "adaptive streams" which are reencoded at varying quality levels. You can send data to an nginx-rtmp server from a gstreamer pipeline using the rtmpsink element. It is also worth noting that gstreamer has a new hlssink2 element which we have not tested; perhaps in the future it will even have a webserver!

v4l2rtspserver: h264 RTSP

Install dependencies:

apt install -y cmake gstreamer1.0-tools gstreamer1.0-plugins-base \
gstreamer1.0-plugins-{good,bad,ugly} gstreamer1.0-alsa gstreamer1.0-x \
v4l-utils alsa-utils libpango1.0-0 libpango1.0-dev x264 vlc iotop \
liblivemedia-dev liblog4cpp5-dev libasound2-dev libssl-dev \
autoconf automake libtool v4l2loopback-dkms \
libvpx-dev libx264-dev libjpeg-dev libx265-dev linux-headers-dev-sunxi

Install kernel source and build v4l2loopback module:

# Adjust the kernel version number to match the current installation (check with "uname -r")
apt install linux-source-5.11.3-dev-sunxi64
cd /usr/src/v4l2loopback-0.12.3; make && make install && depmod -a

Build required v4l2 software:

git clone --recursive https://github.com/mpromonet/v4l2tools && cd v4l2tools && make && make install
cd ..
git clone --recursive https://github.com/mpromonet/v4l2rtspserver && cd v4l2rtspserver && cmake -D LIVE555URL=https://download.videolan.org/pub/contrib/live555/live.2020.08.19.tar.gz . && make && make install

Running the camera:

media-ctl --set-v4l2 '"ov5640 1-003c":0[fmt:UYVY8_2X8/640x480@1/30]'
modprobe v4l2loopback video_nr=10 debug=2
v4l2compress -fH264 -w -vv /dev/video0 /dev/video10 &
v4l2rtspserver -v -S -W 640 -H 480 -F 10 -b /usr/local/share/v4l2rtspserver/ /dev/video10

Note that you might get an error when running media-ctl indicating that the resource is busy. This could be because of the motion program that runs on the stock OS installation. Check and terminate any running /usr/bin/motion processes before running the above steps.

The v4l2compress/v4l2rtspserver method of streaming the camera uses around 45-50% of the CPU for compressing the stream into H264 (640x480@7fps) and around 1-2% of the CPU for serving the RTSP stream. Total system RAM used is roughly 64MB, and the load average is about 0.4-0.5 when idle and about 0.51-0.60 with one RTSP client streaming the camera.

You'll probably see about a 2-3s lag with this approach, possibly due to the H264 compression and the lack of hardware acceleration at the moment.

gstreamer: JPEG RTSP

GStreamer's RTSP server isn't an element you can use with gst-launch, but rather a library. We failed to build its example program, so instead used this very small 3rd party tool which is based on it: https://github.com/sfalexrog/gst-rtsp-launch/

After building gst-rtsp-launch (which is relatively simple on Ubuntu groovy; just apt install libgstreamer1.0-dev libgstrtspserver-1.0-dev first), you can read JPEG data directly from the camera and stream it via RTSP:

media-ctl --set-v4l2 '"ov5640 1-003c":0[fmt:JPEG_1X8/1280x720]' && gst-rtsp-launch 'v4l2src ! image/jpeg,width=1280,height=720 ! rtpjpegpay name=pay0'

This stream can be played using vlc rtsp://pinecube.local:8554/video or mpv, ffmpeg, gst-play-1.0, etc. If you increase the resolution to 1920x1080, mpv and gst-play can still play it, but VLC will complain "The total received frame size exceeds the client's buffer size (2000000). 73602 bytes of trailing data will be dropped!" unless you tell it to increase its buffer size with --rtsp-frame-buffer-size=300000.

gstreamer: h264 RTSP

Left as an exercise to the reader (please update the wiki). Hint: involves bits from the HLS and the JPEG RTSP examples above, but needs a rtph264pay name=pay0 element.
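As a starting point for that exercise, the hint can be turned into a pipeline description by combining the capture and x264enc stages of the HLS example with a rtph264pay name=pay0 payloader. This is an untested sketch, not a verified recipe; the function name h264_rtsp_pipeline and its default resolution and frame rate are assumptions made for illustration.

```shell
#!/bin/bash
# Hypothetical helper: print a gst-rtsp-launch pipeline description that
# software-encodes the camera to h264. Untested sketch assembled from the
# HLS and JPEG RTSP examples on this page.
h264_rtsp_pipeline() {
    local width="${1:-640}" height="${2:-480}" fps="${3:-15}"
    # printf reuses '%s' for every argument, so the pieces are concatenated
    printf '%s' \
        "v4l2src ! video/x-raw,width=${width},height=${height},format=UYVY,framerate=${fps}/1" \
        " ! videoconvert ! video/x-raw,format=I420" \
        " ! x264enc speed-preset=ultrafast tune=zerolatency" \
        " ! rtph264pay name=pay0"
    printf '\n'
}
```

After setting a matching camera mode with media-ctl (for example UYVY8_2X8/640x480@1/15), the output would be passed as a single argument: gst-rtsp-launch "$(h264_rtsp_pipeline 640 480 15)".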

gstreamer: JPEG RTP UDP

Configure camera: media-ctl --set-v4l2 '"ov5640 1-003c":0[fmt:JPEG_1X8/1920x1080]'

Transmit with: gst-launch-1.0 v4l2src ! image/jpeg,width=1920,height=1080 ! rtpjpegpay name=pay0 ! udpsink host=$client_ip port=8000

Receive with: gst-launch-1.0 udpsrc port=8000 ! application/x-rtp, encoding-name=JPEG,payload=26 ! rtpjpegdepay ! jpegdec ! autovideosink

Note that the sender must specify the recipient's IP address in place of $client_ip; this can actually be a multicast address allowing for many receivers! (You'll need to specify a valid multicast address in the receivers' pipeline also; see gst-inspect-1.0 udpsrc and gst-inspect-1.0 udpsink for details.)

gstreamer: JPEG RTP TCP

Configure camera: media-ctl --set-v4l2 '"ov5640 1-003c":0[fmt:JPEG_1X8/1920x1080]'

Transmit with: gst-launch-1.0 v4l2src ! image/jpeg,width=1920,height=1080 ! rtpjpegpay name=pay0 ! rtpstreampay ! tcpserversink host=0.0.0.0 port=1234

Receive with: gst-launch-1.0 tcpclientsrc host=pinecube.local port=1234 ! application/x-rtp-stream,encoding-name=JPEG ! rtpstreamdepay ! application/x-rtp, media=video, encoding-name=JPEG ! rtpjpegdepay ! jpegdec ! autovideosink

gstreamer and socat: MJPEG HTTP server

This rather ridiculous method uses bash, socat, and gstreamer to implement an HTTP-ish server which will serve your video as an MJPEG stream which is playable in browsers.

This approach has the advantage of being relatively low latency (under a second), browser-compatible, and not needing to reencode anything on the CPU (it gets JPEG data from the camera itself). Compared to HLS, it has the disadvantages that MJPEG requires more bandwidth than h264 for similar quality, pause and seek are not possible, stalled connections cannot jump ahead when they are unstalled, and, in the case of this primitive implementation, it only supports one viewer at a time. (Though, really, the RTSP examples on this page perform very poorly with multiple viewers, so...)

Gstreamer can almost do this by itself, as it has a multipartmux element which produces the headers which precede each frame. But sadly, despite various forum posts lamenting the lack of one over the last 12+ years, as of the end of the 50th year of the UNIX era (aka 2020), somehow nobody has yet gotten a webserver element merged in to gstreamer (which is necessary to produce the HTTP response, which is required for browsers other than firefox to play it). So, here is an absolutely minimal "webserver" which will get MJPEG displaying in a (single) browser.

Create a file called mjpeg-response.sh:

#!/bin/bash
media-ctl --set-v4l2 '"ov5640 1-003c":0[fmt:JPEG_1X8/1920x1080]'
b="--duct_tape_boundary"
echo -en "HTTP/1.1 200 OK\r\nContent-type: multipart/x-mixed-replace;boundary=$b\r\n\r\n"
gst-launch-1.0 v4l2src ! image/jpeg,width=1920,height=1080 ! multipartmux boundary=$b ! fdsink fd=2 2>&1 >/dev/null

Make it executable: chmod +x mjpeg-response.sh

Run the server: socat TCP-LISTEN:8080,reuseaddr,fork EXEC:./mjpeg-response.sh

And browse to http://pinecube.local:8080/ in your browser.

virtual web camera: gstreamer, mjpeg, udp rtp unicast

It's possible to set up the pinecube as a virtual camera video device (Video 4 Linux) so that you can use it with video conferencing software, such as Jitsi Meet. Note that this has fairly minimal (<1s) lag when tested on a wired 1Gb ethernet network connection and the frame rate is passable. MJPEG is very wasteful in terms of network resources, so this is something to keep in mind. The following instructions assume Debian Linux (Bullseye) as your desktop machine, but could work with other Linux OSes too. It's possible that someday a similar system could work with Mac OS X provided that someone writes a gstreamer plugin that exposes a Mac OS Core Media DAL device as a virtual webcam, like they did here for OBS.

First, you will need to set up the PineCube with gstreamer much like in the JPEG RTP UDP example above, but at 1280x720 resolution. You will be streaming to the desktop machine over UDP on port 8000; its IP address is represented by $desktop below.

media-ctl --set-v4l2 '"ov5640 1-003c":0[fmt:JPEG_1X8/1280x720]'
gst-launch-1.0 v4l2src device=/dev/video0 ! image/jpeg,width=1280,height=720,framerate=30/1 ! rtpjpegpay name=pay0 ! udpsink host=$desktop port=8000

On your desktop machine, you will need to install the gstreamer suite and the special v4l2loopback kernel module to bring the mjpeg stream to the Video 4 Linux device /dev/video10.

sudo apt install gstreamer1.0-tools gstreamer1.0-plugins-base gstreamer1.0-plugins-good gstreamer1.0-plugins-bad gstreamer1.0-plugins-ugly v4l2loopback-dkms
# Creates /dev/video10 as a virtual v4l2 device, allocates increased buffers and exposes exclusive capabilities for chromium to find the video device
sudo modprobe v4l2loopback video_nr=10 max_buffers=32 exclusive_caps=1
gst-launch-1.0 udpsrc port=8000 ! application/x-rtp, encoding-name=JPEG,payload=26,framerate=30/1 ! rtpjpegdepay ! jpegdec ! video/x-raw, format=I420, width=1280, height=720 ! autovideoconvert ! v4l2sink device=/dev/video10

The most common error found when launching the gstreamer pipeline above is the following error message, which seems to happen when the max_buffers aren't set on the v4l2loopback module (see above), or if there is a v4l client (vlc, chromium) already connected to /dev/video10 when starting the pipeline. There does seem to be a small level of instability in this stack that could be improved.

gstbasesrc.c(3055): gst_base_src_loop (): /GstPipeline:pipeline0/GstUDPSrc:udpsrc0: streaming stopped, reason not-negotiated (-4)

Now that you have /dev/video10 hooked into the gstreamer pipeline you can then connect to it using VLC. VLC is a good local test that things are working. You can view the stream like this. Note that you could do the same thing with mpv/ffmpeg, but there are problems currently.

vlc v4l2:///dev/video10

Be sure to disconnect vlc before trying to use the virtual web camera with chromium. Launch chromium and go to a web conference like jitsi. When it prompts you for the camera, pick the "Dummy Video Device..." and it should be much like what you see in vlc. Note that firefox isn't really working at this moment and the symptoms appear very similar to the problem with mpv/ffmpeg mentioned above, i.e. when they connect to the camera they show only the first frame and then drop. It's unclear whether the bug is in gstreamer, v4l, or ffmpeg (or somewhere in these instructions).

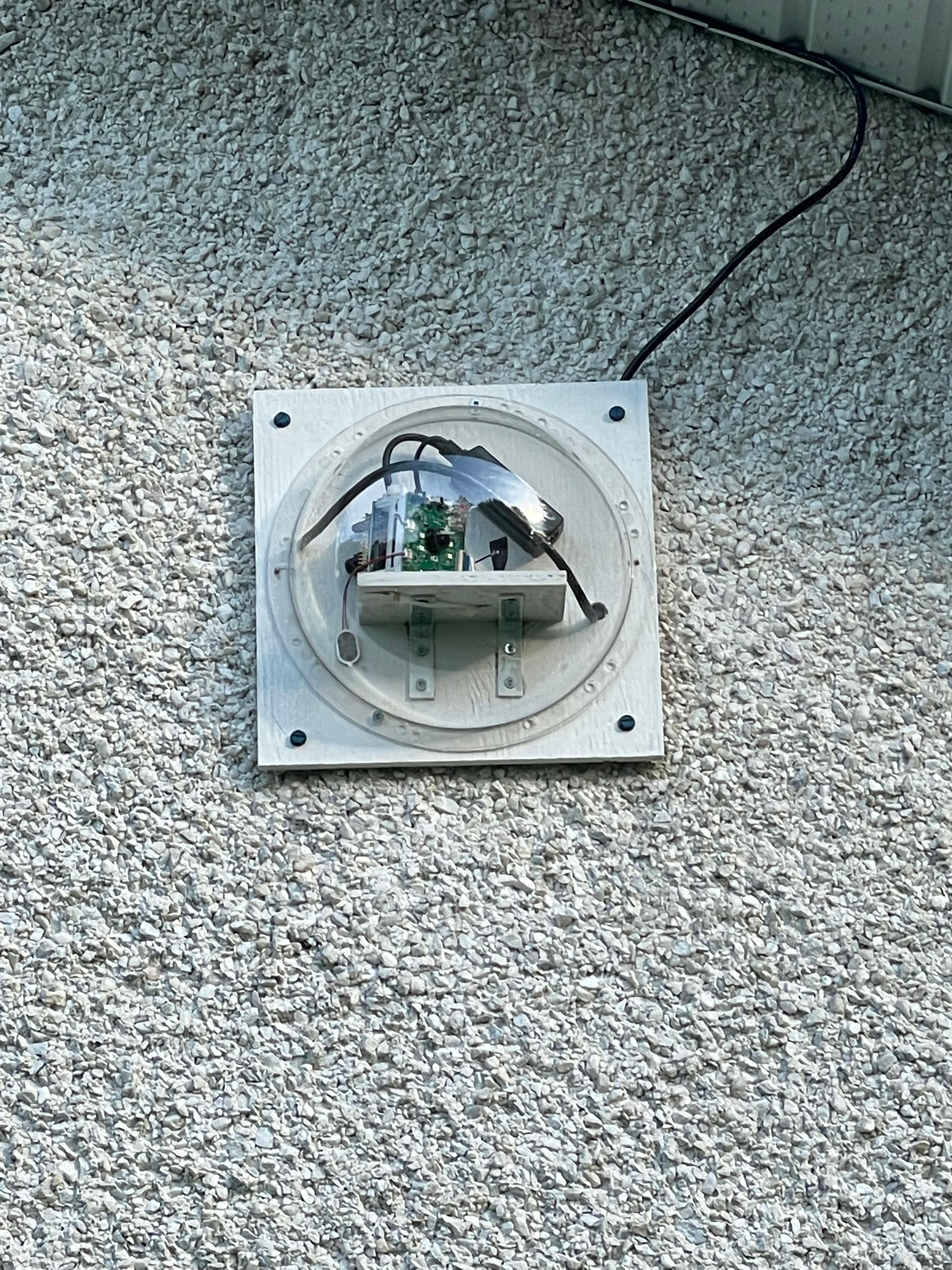

PineCube as a security camera with Motion

It's possible to use the pinecube as an inside or outside security camera using motion. For outside, you'll need an enclosure with a transparent dome to protect from the weather. One suggestion is to mount the camera with the lens as close as possible to the dome to avoid reflection.

The Motion package can be installed in a variety of Linux flavours. There's a package in the standard Ubuntu and Debian repositories, and it works with Armbian. It provides a very simple web interface for live viewing of the camera feed and also has motion trigger capabilities to store either still pictures or, in later versions, videos. Note that it is also possible to build hooks to automatically process or upload those recordings.

To get things working quickly with motion you can set the following in the /etc/motion/motion.conf and start it with "sudo /etc/init.d/motion start"

# v4l2_palette 14 selects UYVY8
v4l2_palette 14
width 640
height 480
framerate 15

This mode and resolution works fine with Motion and works well with video motion capture (Motion version >= 4.2.2). However, if you want different modes and resolutions you'll need to set the camera to those modes with the media-ctl tool that comes with the v4l-utils package. That will need to be set before the motion service starts. A simple method to ensure that it gets set before motion starts every time, even across reboots, is to make a small modification to the /lib/systemd/system/motion.service

[Service]
Type=simple
User=motion
# Add the ExecStartPre line below with the mode that the camera will use
ExecStartPre=/usr/bin/media-ctl --set-v4l2 '"ov5640 1-003c":0[fmt:UYVY8_2X8/1280x720@1/15]'
ExecStart=/usr/bin/motion
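An alternative to editing the packaged unit file is a systemd drop-in, which is kept separately from the file in /lib/systemd/system. The sketch below is an assumption about how one might set this up rather than a tested recipe for the PineCube; the function name write_motion_dropin and the file name camera-mode.conf are made up for illustration.

```shell
#!/bin/bash
# Hypothetical helper: write a systemd drop-in that sets the camera mode
# before Motion starts. The drop-in directory is a parameter so the
# function can be tried out without touching /etc.
write_motion_dropin() {
    local mode="${1:-UYVY8_2X8/1280x720@1/15}"
    local dir="${2:-/etc/systemd/system/motion.service.d}"
    mkdir -p "$dir"
    # Unquoted heredoc: ${mode} is expanded, the quotes are written literally
    cat > "$dir/camera-mode.conf" <<EOF
[Service]
ExecStartPre=/usr/bin/media-ctl --set-v4l2 '"ov5640 1-003c":0[fmt:${mode}]'
EOF
}
```

After calling write_motion_dropin as root, run systemctl daemon-reload and restart the motion service so that the new ExecStartPre line takes effect.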

Note that you must modify /etc/motion/motion.conf so that the v4l2_palette, width, height and framerate match the mode you set with media-ctl. See the Motion documentation to match the v4l2_palette to the mode. Here is a list of modes that have been tried so far.

UYVY8_2X8/640x480@1/30
UYVY8_2X8/640x480@1/15
UYVY8_2X8/1280x720@1/15 # This one seems to be fine for live viewing, but causes performance problems when using Motion to capture videos
JPEG_1X8/1280x720@1/15
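To keep motion.conf in step with the media-ctl mode, the mode string can be split into the corresponding settings. This is a minimal sketch: the function name mode_to_motion_conf is made up, it assumes the mode includes a frame interval (the @1/15 part), and the only palette value it knows is 14 for UYVY8, as used above.

```shell
#!/bin/bash
# Hypothetical helper: turn a media-ctl mode such as
# UYVY8_2X8/1280x720@1/15 into the matching motion.conf lines.
mode_to_motion_conf() {
    local mode="$1"
    local code="${mode%%/*}"      # e.g. UYVY8_2X8
    local rest="${mode#*/}"       # e.g. 1280x720@1/15
    local size="${rest%%@*}"      # e.g. 1280x720
    local interval="${rest#*@}"   # e.g. 1/15 (frame interval)
    if [ "$code" = "UYVY8_2X8" ]; then
        echo "v4l2_palette 14"
    else
        echo "# v4l2_palette: look up the value for $code in the Motion documentation"
    fi
    echo "width ${size%%x*}"
    echo "height ${size#*x}"
    echo "framerate ${interval#*/}"
}
```

For example, mode_to_motion_conf 'UYVY8_2X8/1280x720@1/15' prints the four settings to copy into /etc/motion/motion.conf.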

PineCube as a WiFi AP

If the PineCube has a wired ethernet connection to the main network, it is possible to use it as a WiFi access point, for example to extend your existing network's coverage further outdoors. Here are some steps you can take to do this, starting from an Armbian system. Note that you may need to upgrade your kernel to 5.13.x for this to work well.

- Install bridge-utils package using apt-get

- Add the following to your /etc/network/interfaces to set up both the eth0 ethernet interface and the br0 bridge interface (change br0 from dhcp to static and add the address settings if a static IP is preferred)

/etc/network/interfaces:

auto eth0

iface eth0 inet manual

pre-up /sbin/ifconfig $IFACE up

pre-down /sbin/ifconfig $IFACE down

auto br0

iface br0 inet dhcp

bridge_ports eth0

bridge_stp on

- Edit the /etc/default/hostapd uncommenting the line with 'DAEMON_CONF="/etc/hostapd.conf"'

- Edit the /etc/hostapd.conf to set the SSID, password and channel for your AP.

- Run sudo systemctl enable hostapd.service to enable the hostapd service on startup

- Reboot your cube with the ethernet cable connected
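For the /etc/hostapd.conf step in the list above, a minimal WPA2 configuration bridged to br0 might look like the output of the sketch below. The interface name wlan0, the 2.4GHz hw_mode=g setting and the default channel are assumptions, and whether the PineCube's WiFi driver accepts these settings has not been verified here; the function name write_hostapd_conf is made up for illustration.

```shell
#!/bin/bash
# Hypothetical helper: write a minimal hostapd configuration for a WPA2
# access point attached to the br0 bridge. wlan0 is an assumed interface
# name; check yours with "ip link".
write_hostapd_conf() {
    local ssid="$1" passphrase="$2" channel="${3:-6}" out="${4:-/etc/hostapd.conf}"
    cat > "$out" <<EOF
interface=wlan0
bridge=br0
ssid=${ssid}
hw_mode=g
channel=${channel}
wpa=2
wpa_key_mgmt=WPA-PSK
rsn_pairwise=CCMP
wpa_passphrase=${passphrase}
EOF
    # The file contains the passphrase, so keep it readable by the owner only
    chmod 600 "$out"
}
```

It would be called as root with an SSID and a passphrase of 8 to 63 characters, for example write_hostapd_conf MyCubeAP 'a long passphrase' 11.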

PineCube as a webcam

The PineCube can be powered by the host and communicate as a peripheral. First, you'll need a dual USB-A (male) cable to plug it into your computer. Note that the Micro-USB port can be used only for power because the data lines are not connected.

TBD:

- Kernel patches applied from here (perhaps already available in NixOS): https://github.com/danielfullmer/pinecube-nixos/blob/master/kernel/Pine64-PineCube-support.patch

- Additional patch to the PineCube device tree: disable ehci0 and ohci0, enable the usb_otg device instead, and set dr_mode to otg

- Instructions for sunxi and ethernet gadget: https://linux-sunxi.org/USB_Gadget/Ethernet

- Add sunxi and g_ether to /etc/modules to get them to load on startup

- Configure the g_ether device to start with a stable MAC address in /etc/modprobe.d/g_ether.conf:

options g_ether host_addr=f6:11:fd:ed:ec:6e

- Set a static IP address for usb0 on startup (/etc/network/interfaces):

auto usb0
iface usb0 inet static
address 192.168.10.2
netmask 255.255.255.0

- Boot the PineCube while it is plugged into a computer

- Configure the USB ethernet device on the computer to be in the same subnet as the PineCube

- Attempt to load the uvc_gadget (usb_f_uvc) or g_webcam

- Look at this project to see if it can bridge UVC gadget output with the v4l from the OV5640 camera sensor: https://github.com/wlhe/uvc-gadget

PineCube as a recorder for loud noises

If you have a kernel that has the sound support (see the Sound Control section) then you can use it to make recordings when there is a noise above a certain threshold. The following script is a very simple example that uses the alsa-utils and the sox command to do this. You can use the noise-stats.txt file and some noise testing to figure out a good threshold for your camera.

#!/bin/bash
# Directory where the sound recordings should go
NOISE_FILE_DIR="/root/noises"
# Threshold to use with the mean delta to decide to preserve the recording
MEAN_DELTA_THRESHOLD="0.002"
# Sample length (in seconds)
SAMPLE_LENGTH="10"

# Make sure the output directory exists before recording
mkdir -p "$NOISE_FILE_DIR"

while :
do
    # Record one sample, then extract the "Mean delta" value from the sox statistics
    stats=$(arecord -d "$SAMPLE_LENGTH" -f S16_LE > /tmp/sample.wav 2>/dev/null && sox -t .wav /tmp/sample.wav -n stat 2>&1 | grep 'Mean delta:' | cut -d: -f2 | sed 's/^[ ]*//')
    ts=$(date +%s)
    # Keep the recording only when a value was measured and it exceeds the threshold
    if [ -n "$stats" ] && (( $(echo "$stats > $MEAN_DELTA_THRESHOLD" | bc -l) )); then
        mv /tmp/sample.wav "$NOISE_FILE_DIR/noise-$ts.wav" # TODO convert to mp3
    fi
    rm -f /tmp/sample.wav
    echo "$ts $stats" >> noise-stats.txt
done
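To pick a threshold from the collected noise-stats.txt, it can help to look at the range of mean delta values the script has logged. The following is a minimal sketch that assumes the two-column "timestamp value" format written by the script above; the function name noise_stats_summary is made up for illustration.

```shell
#!/bin/bash
# Hypothetical helper: summarise the mean delta values (second column)
# logged in noise-stats.txt. Lines without a value are skipped.
noise_stats_summary() {
    awk 'NF >= 2 {
        v = $2 + 0
        if (n == 0 || v < min) min = v
        if (n == 0 || v > max) max = v
        sum += v; n++
    }
    END {
        if (n > 0) printf "samples=%d min=%.6f mean=%.6f max=%.6f\n", n, min, sum / n, max
    }' "${1:-noise-stats.txt}"
}
```

Comparing the summary from a quiet period with one recorded during deliberate noise gives a rough range in which to place MEAN_DELTA_THRESHOLD.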

Debugging camera issues with the gstreamer pipeline

If the camera does not appear to work, it is possible to change the v4l2src to videotestsrc and the gstreamer pipeline will produce a synthetic test image without using the camera hardware.

If the camera output shows only sensor noise lines over a black or white image, the camera may be in a broken state. When in that state, the following kernel messages were observed:

[ 1703.577304] alloc_contig_range: [46100, 464f5) PFNs busy
[ 1703.578570] alloc_contig_range: [46200, 465f5) PFNs busy
[ 1703.596924] alloc_contig_range: [46300, 466f5) PFNs busy
[ 1703.598060] alloc_contig_range: [46400, 467f5) PFNs busy
[ 1703.600480] alloc_contig_range: [46400, 468f5) PFNs busy
[ 1703.601654] alloc_contig_range: [46600, 469f5) PFNs busy
[ 1703.619165] alloc_contig_range: [46100, 464f5) PFNs busy
[ 1703.619528] alloc_contig_range: [46200, 465f5) PFNs busy
[ 1703.619857] alloc_contig_range: [46300, 466f5) PFNs busy
[ 1703.641156] alloc_contig_range: [46100, 464f5) PFNs busy

Camera Adjustments

Focus

The focus of the lens can be manually adjusted through rotation. Note that initially, the lens could be tight.

Low light mode

To get imagery in low-light conditions you can turn on the infrared LEDs to light up the dark area and also enable the IR cut filter using the commands below. Note that these were performed on Armbian using the instructions from here [1].

# Run these as root

# Turn on the IR LED lights (note that you can see a faint red glow from them when it's low light)
# Turn them off with echo 1 instead (this may be inverted depending on the version of the kernel you have)
# echo 0 > /sys/class/leds/pine64\:ir\:led1/brightness
# echo 0 > /sys/class/leds/pine64\:ir\:led2/brightness

# Export gpio, set direction
# echo 45 > /sys/class/gpio/export
# echo out > /sys/class/gpio/gpio45/direction

# Enable IR cut filter (note that you can hear the switching noise)
# Disable with echo 0 instead
# echo 1 > /sys/class/gpio/gpio45/value
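The commands above can be wrapped in a single function for switching between day and night operation. This is a sketch under assumptions: night_mode and SYSFS_ROOT are hypothetical names introduced here, the LED polarity follows the commands above (0 means on) and may be inverted on some kernels, and the function must be run as root on a real device.

```shell
# Root of sysfs; overridable so the function can be tried against a scratch directory.
SYSFS_ROOT="${SYSFS_ROOT:-/sys}"

# night_mode on  -> IR LEDs on,  IR cut filter enabled
# night_mode off -> IR LEDs off, IR cut filter disabled
night_mode() {
    case "$1" in
        on)  led=0; cut=1 ;;
        off) led=1; cut=0 ;;
        *)   echo "usage: night_mode on|off" >&2; return 2 ;;
    esac
    for n in 1 2; do
        echo "$led" > "$SYSFS_ROOT/class/leds/pine64:ir:led$n/brightness"
    done
    gpio="$SYSFS_ROOT/class/gpio/gpio45"
    # Export the GPIO only if it has not been exported already.
    [ -d "$gpio" ] || echo 45 > "$SYSFS_ROOT/class/gpio/export"
    echo out > "$gpio/direction"
    echo "$cut" > "$gpio/value"
}
```

Checking for the gpio45 directory before exporting avoids the write error that occurs when the GPIO was already exported by an earlier call.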

Camera controls

It is possible to adjust the camera using certain internal camera controls, such as contrast, brightness, saturation and more. These controls can be accessed using the v4l2-ctl tool that is part of the v4l-utils package.

# List the current values of the controls
v4l2-ctl -d /dev/v4l-subdev* --list-ctrls

User Controls

    contrast 0x00980901 (int) : min=0 max=255 step=1 default=0 value=0 flags=slider
    saturation 0x00980902 (int) : min=0 max=255 step=1 default=64 value=64 flags=slider
    hue 0x00980903 (int) : min=0 max=359 step=1 default=0 value=0 flags=slider
    white_balance_automatic 0x0098090c (bool) : default=1 value=1 flags=update
    red_balance 0x0098090e (int) : min=0 max=4095 step=1 default=0 value=0 flags=inactive, slider
    blue_balance 0x0098090f (int) : min=0 max=4095 step=1 default=0 value=0 flags=inactive, slider
    exposure 0x00980911 (int) : min=0 max=65535 step=1 default=0 value=4 flags=inactive, volatile
    gain_automatic 0x00980912 (bool) : default=1 value=1 flags=update
    gain 0x00980913 (int) : min=0 max=1023 step=1 default=0 value=20 flags=inactive, volatile
    horizontal_flip 0x00980914 (bool) : default=0 value=0
    vertical_flip 0x00980915 (bool) : default=0 value=0
    power_line_frequency 0x00980918 (menu) : min=0 max=3 default=1 value=1

Camera Controls

    auto_exposure 0x009a0901 (menu) : min=0 max=1 default=0 value=0 flags=update

Image Processing Controls

    pixel_rate 0x009f0902 (int64) : min=0 max=2147483647 step=1 default=61430400 value=21001200 flags=read-only
    test_pattern 0x009f0903 (menu) : min=0 max=4 default=0 value=0

# Set the contrast controls to the maximum value
v4l2-ctl -d /dev/v4l-subdev* --set-ctrl contrast=255

You can tell which controls can be changed by looking at their flags. The inactive flag indicates that a control is currently disabled, and the read-only flag marks a control that cannot be set at all. Some controls are disabled while other controls are turned on. For example, the gain control above is inactive because gain_automatic is enabled with a value of "1". Note that at the current time the auto_exposure control is inverted, so a value of "0" means on, while "1" means off; this may change in the future. You'll need to turn off auto_exposure (value=1) if you want to manually set the exposure control.
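Switching to manual exposure therefore takes two steps: turn auto_exposure off, then set exposure. The function below is a sketch, not a tested recipe: set_manual_exposure, V4L2_CTL and SUBDEV are hypothetical names, /dev/v4l-subdev0 is an assumed device path, and the range check uses the min and max values from the listing above.

```shell
# Command and sub-device to use; both can be overridden from the environment.
V4L2_CTL="${V4L2_CTL:-v4l2-ctl}"
SUBDEV="${SUBDEV:-/dev/v4l-subdev0}"

# set_manual_exposure <value>: disable auto exposure, then set the exposure.
set_manual_exposure() {
    case "$1" in
        ''|*[!0-9]*) echo "exposure must be a non-negative integer" >&2; return 2 ;;
    esac
    if [ "$1" -gt 65535 ]; then
        echo "exposure must be between 0 and 65535" >&2
        return 2
    fi
    # auto_exposure is currently inverted: 1 means off.
    "$V4L2_CTL" -d "$SUBDEV" --set-ctrl auto_exposure=1 &&
        "$V4L2_CTL" -d "$SUBDEV" --set-ctrl exposure="$1"
}
```

If the auto_exposure inversion is fixed in a later kernel, the value written in the first step would need to change accordingly.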

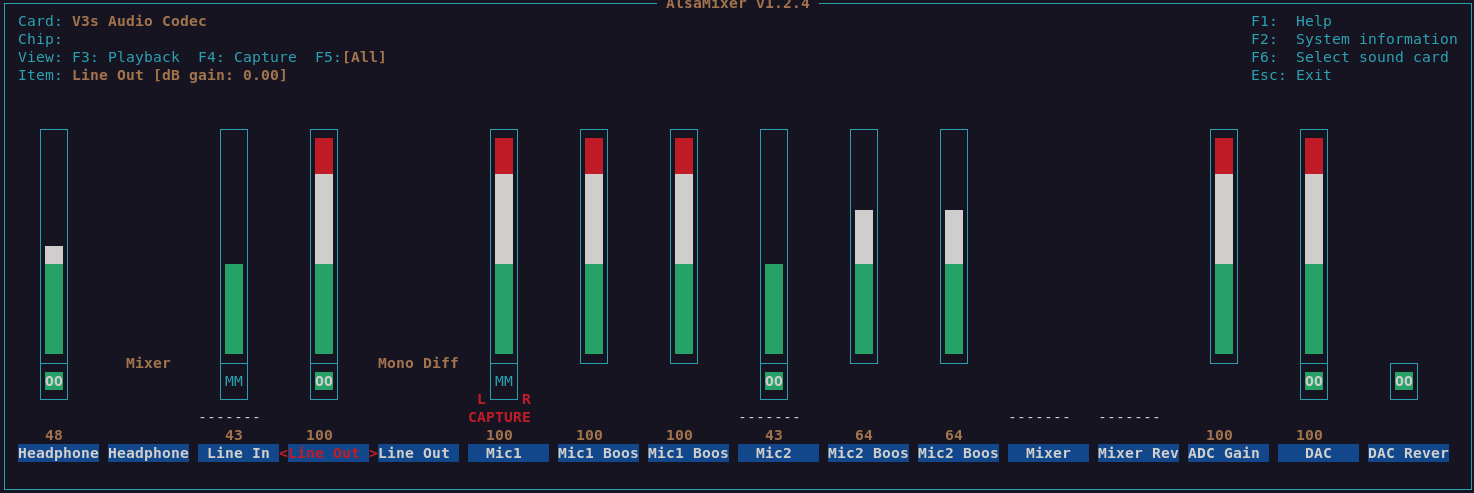

Sound Controls

Sound is currently available only with special patches on top of a 5.13.13 or higher kernel with Armbian or NixOS. Once you have a kernel that supports sound you can install alsa-utils to get the alsamixer tool. The following mixer settings have been found to work with both playback and record. Note that you'll need to press F5 to get the capture controls and the space bar to turn capture on or off for a device. The speaker dangles on a wire from the device. The microphone is located about 1cm below the lens on the front-facing circuit board.

SDK

Stock Linux

- Direct Download from pine64.org

- MD5 (7zip file): efac108dc98efa0a1f5e77660ba375f8

- File Size: 3.50GB
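The downloaded archive can be checked against the MD5 value listed above before unpacking. The helper below is a small sketch; verify_md5 is a hypothetical function name, and it assumes the md5sum tool from coreutils is installed.

```shell
# verify_md5 <file> <expected md5>: succeed only if the checksum matches.
verify_md5() {
    actual=$(md5sum < "$1" | cut -d' ' -f1)
    [ "$actual" = "$2" ]
}
```

For example: verify_md5 'PineCube Stock BSP-SDK ver1.0.7z' efac108dc98efa0a1f5e77660ba375f8 && echo "checksum OK"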

How to compile

You can either set up a machine for the build environment, or use a Vagrant virtual machine provided by Elimo Engineering.

On a dedicated machine

Recommended system requirements:

- OS: (L)Ubuntu 16.04

- CPU: 64-bit based

- Memory: 8 GB or higher

- Disk: 15 GB free hard disk space

Install required packages

sudo apt-get install p7zip-full git make u-boot-tools libxml2-utils bison build-essential gcc-arm-linux-gnueabi g++-arm-linux-gnueabi zlib1g-dev gcc-multilib g++-multilib libc6-dev-i386 lib32z1-dev

Install older Make 3.82 and Java JDK 6

pushd /tmp
wget https://ftp.gnu.org/gnu/make/make-3.82.tar.gz
tar xfv make-3.82.tar.gz
cd make-3.82
./configure
make
sudo apt purge -y make
sudo ./make install
cd ..
# Please, download jdk-6u45-linux-x64.bin from https://www.oracle.com/java/technologies/javase-java-archive-javase6-downloads.html (requires free login)
chmod +x jdk-6u45-linux-x64.bin
./jdk-6u45-linux-x64.bin
sudo mkdir /opt/java/
sudo mv jdk1.6.0_45/ /opt/java/
sudo update-alternatives --install /usr/bin/javac javac /opt/java/jdk1.6.0_45/bin/javac 1
sudo update-alternatives --install /usr/bin/java java /opt/java/jdk1.6.0_45/bin/java 1
sudo update-alternatives --install /usr/bin/javaws javaws /opt/java/jdk1.6.0_45/bin/javaws 1
sudo update-alternatives --config javac
sudo update-alternatives --config java
sudo update-alternatives --config javaws
popd

Unpack SDK and then compile and pack the image

7z x 'PineCube Stock BSP-SDK ver1.0.7z'
mv 'PineCube Stock BSP-SDK ver1.0' pinecube-sdk
cd pinecube-sdk/camdroid
source build/envsetup.sh
lunch
mklichee
make -j3
pack

Using Vagrant

You can avoid setting up your machine and just use Vagrant to spin up a development environment in a VM.

Just clone the Elimo Engineering repo and follow the instructions in the readme file.

After spinning up the VM, you just need to run the build:

cd pinecube-sdk/camdroid
source build/envsetup.sh
lunch
mklichee
make -j3
pack

Community Projects

Share your project with a PineCube here!

| Project Homepage | Project Source | PineCube Implementations | |
|---|---|---|---|
| openWRT | JuanEst | juansef packages | Pine64 Forum thread |